AWS CodeCommit, CodeBuild, and CodePipeline… Oh My

At my day job we’ve leveraged Azure DevOps to handle our Continuous Integration and Continuous Deployment needs, and for the most part it is a really well done tool. Nearly all tasks can be defined as code in a single YAML file, which makes changes easy to track, and the integrated ticket/kanban board is a huge bonus: one can immediately see when certain changes were applied, which erases ambiguity and confusion. I’ve also used Jenkins and GitLab to some extent at past gigs, each with its pluses and minuses, so I feel like I have a pretty good grasp on what developers and operators require in a CI/CD pipeline.

As a lazy soul and DevOps engineer, I’ve gotten tired of manually building and syncing the changes for this website to the S3 bucket which hosts the content you are viewing. That made it a perfect opportunity to throw together some automation to handle the Hugo build and the upload to S3 for me. I needed three things in my pipeline:

- A place to push code to/A git repo

- A way to build the website/Continuous Integration

- A way to deploy the rendered output to an S3 bucket/Continuous Deployment

Earlier this week, as the title suggests, I decided to take a gander at AWS’s offerings, which comprise four, maybe five, services: AWS CodeCommit, AWS CodeBuild, AWS CodeDeploy, AWS CodePipeline, and AWS CodeStar. Each service does what its name suggests, but many of the cookie-cutter templates would not work for me and Hugo, so AWS CodeStar was out immediately.

AWS CodeCommit

AWS CodeCommit proved to be the most straightforward thing to set up. If you’ve ever started a repo on GitHub or any hosted git solution, they all work the same and this one is no different. HTTPS and SSH authentication/keys are all managed with your IAM user account, which is nice because there is no separate place to manage access and authentication.
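To give a feel for how little there is to it, here is a minimal sketch of the clone URL pattern CodeCommit uses. The region and repository name are placeholder values for illustration, not my actual setup.

```python
def codecommit_clone_urls(region: str, repo: str) -> dict:
    """Return the HTTPS and SSH clone URLs for a CodeCommit repository."""
    host = f"git-codecommit.{region}.amazonaws.com"
    path = f"/v1/repos/{repo}"
    return {
        "https": f"https://{host}{path}",
        "ssh": f"ssh://{host}{path}",
    }


if __name__ == "__main__":
    # "us-east-1" and "my-hugo-site" are hypothetical example values.
    urls = codecommit_clone_urls("us-east-1", "my-hugo-site")
    for scheme, url in urls.items():
        print(f"{scheme}: git clone {url}")
```

Over SSH, the username is the SSH key ID that IAM assigns when you upload your public key, so the credentials really do live in one place.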

The service claims to be free for five or fewer users, so it is a perfect tool for very small teams or personal projects, even if all you are looking for is a code backup solution.

My one negative for CodeCommit is that there’s no issue tracker. It seems like they could set up a CodeTracker service, and then I’d think AWS could at least compete with Azure DevOps, but as it stands it’s just not a fully realized suite of tools yet. A great tool for personal projects, but not for teams.

AWS CodeBuild

AWS CodeBuild allows you, through a series of click-through menus, to define an environment that runs a build process on a container or EC2 instance and outputs a file or folder to S3 or some other destination for storage.

This took the most time to get right, mostly because I was used to the way Azure DevOps handles build tasks. In AZDO you can define everything: environment, server type (Linux vs Windows), steps, tasks, jobs, and integrations with tons of plugins for different services, ALL in a single YAML file. In CodeBuild, the buildspec.yml file only defines the task steps that will happen inside the build agent/runner.

Below is the buildspec.yml file for building a site with Hugo:

    version: 0.2

    phases:
      install:
        commands:
          - echo Install Start
          - apt-get -qq update && apt-get -qq install curl
          - apt-get -qq install asciidoctor
          - curl -s -L https://github.com/gohugoio/hugo/releases/download/v0.67.1/hugo_0.67.1_Linux-64bit.deb -o hugo.deb
          - dpkg -i hugo.deb
        finally:
          - echo Install Finished
      build:
        commands:
          - echo Build Start
          - cd $CODEBUILD_SRC_DIR
          - rm -f buildspec.yml && rm -rf .git && rm -f README.md
          - hugo --quiet
        finally:
          - echo Build Finished

    artifacts:
      files:
        - '**/*'
      base-directory: $CODEBUILD_SRC_DIR/public/
      discard-paths: no

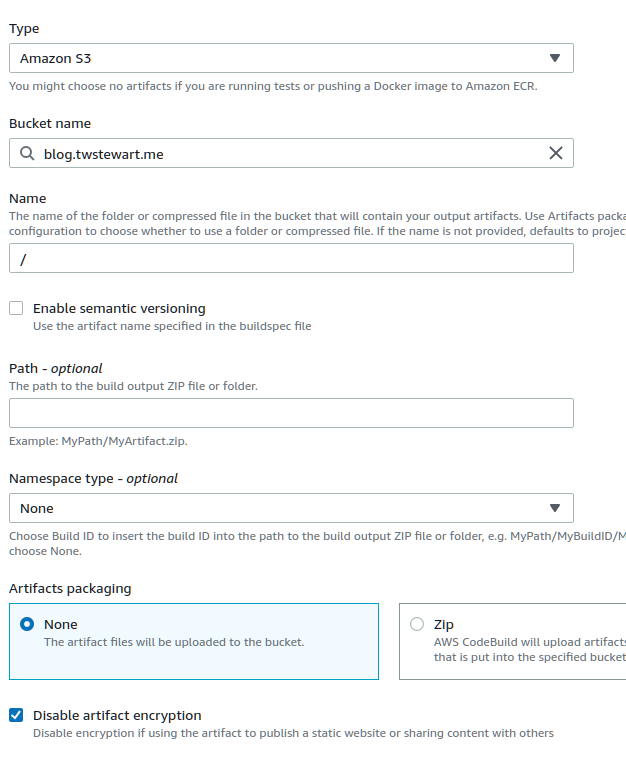

The Publish/Artifact section gave me a lot of trouble. The S3 bucket which hosts the website has all the content at /, and luckily there were some miscellaneous forum posts which steered me in the right direction.

- Name needs to be /

- Disable artifact encryption needs to be checked for the files to be readable to folks like you.
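For anyone who would rather not click through the console, the two settings above map onto fields in the CodeBuild project definition. What follows is only a sketch of that shape: the project name, bucket, role ARN, and build image are assumptions for illustration, and I set my own project up through the console rather than this way.

```python
import json


def build_project_definition(name, repo_url, site_bucket, role_arn):
    """Assemble a CodeBuild project definition as a plain dict."""
    return {
        "name": name,
        "source": {"type": "CODECOMMIT", "location": repo_url},
        "environment": {
            "type": "LINUX_CONTAINER",
            # Assumed image; the buildspec only needs apt-get and dpkg.
            "image": "aws/codebuild/standard:4.0",
            "computeType": "BUILD_GENERAL1_SMALL",
        },
        "artifacts": {
            "type": "S3",
            "location": site_bucket,
            "name": "/",                 # put the files at the bucket root
            "packaging": "NONE",         # plain files rather than a zip
            "encryptionDisabled": True,  # keep the site readable to visitors
        },
        "serviceRole": role_arn,
    }


if __name__ == "__main__":
    # Every value below is a hypothetical placeholder.
    project = build_project_definition(
        name="hugo-site-build",
        repo_url="https://git-codecommit.us-east-1.amazonaws.com/v1/repos/my-hugo-site",
        site_bucket="example-site-bucket",
        role_arn="arn:aws:iam::123456789012:role/example-codebuild-role",
    )
    print(json.dumps(project, indent=2))
```

Saved to a file, output like this should be usable with `aws codebuild create-project --cli-input-json file://project.json`, though I have not gone that route myself.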

Once I got that solved, I had everything I thought I needed: the git repo, the build process, and the file delivery to S3. It was a short-lived victory, until I discovered that CodeBuild has no built-in setting to kick off a build when CodeCommit receives a push. You can run builds manually, but that’s not the DevOps way. Nay, as far as I could tell, the way to automagically start a build process is through CodePipeline.

AWS CodePipeline

The essential use of CodePipeline is to unify all the other CodeBlah services into one final grouping and be the missing automation part that so many of us seek.

- Source = Match to correct repo in AWS CodeCommit

- Build = Match to the correct Build process in AWS CodeBuild

- Deploy = ??? Re-define the Deploy to S3 but more confusingly

The question marks are mine. On its own, my Build process was already delivering the output to my desired bucket. Apparently, when run through CodePipeline, the pipeline ignores those settings and uploads the artifact as a zipped package to a secondary S3 bucket. You then have the joy of setting up a third step, Deploy, to fetch the build artifact and push its contents to the intended final destination.

If you are confused, because it seems like we already did that step in the Build process and now you have to set it up again, you’d be correct in that feeling.
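To make the hand-off between the three stages concrete, here is a rough sketch of what the pipeline definition looks like as data. All names, buckets, and the role ARN are hypothetical placeholders, and the artifact names `SourceOutput` and `BuildOutput` are labels I picked for the example.

```python
import json


def action(name, category, provider, configuration, inputs=(), outputs=()):
    """Build one pipeline action that uses an AWS-owned action type."""
    return {
        "name": name,
        "actionTypeId": {
            "category": category,
            "owner": "AWS",
            "provider": provider,
            "version": "1",
        },
        "configuration": configuration,
        "inputArtifacts": [{"name": n} for n in inputs],
        "outputArtifacts": [{"name": n} for n in outputs],
    }


def site_pipeline(name, role_arn, artifact_bucket, repo, branch, project, site_bucket):
    """Source -> Build -> Deploy, with artifacts passed between stages."""
    source = action("Source", "Source", "CodeCommit",
                    {"RepositoryName": repo, "BranchName": branch},
                    outputs=["SourceOutput"])
    build = action("Build", "Build", "CodeBuild",
                   {"ProjectName": project},
                   inputs=["SourceOutput"], outputs=["BuildOutput"])
    # Extract unzips the build artifact into the website bucket.
    deploy = action("Deploy", "Deploy", "S3",
                    {"BucketName": site_bucket, "Extract": "true"},
                    inputs=["BuildOutput"])
    return {
        "pipeline": {
            "name": name,
            "roleArn": role_arn,
            # The "secondary" bucket where zipped artifacts land.
            "artifactStore": {"type": "S3", "location": artifact_bucket},
            "stages": [
                {"name": "Source", "actions": [source]},
                {"name": "Build", "actions": [build]},
                {"name": "Deploy", "actions": [deploy]},
            ],
        }
    }


if __name__ == "__main__":
    definition = site_pipeline(
        "hugo-site-pipeline",
        "arn:aws:iam::123456789012:role/example-pipeline-role",
        "example-artifact-bucket", "my-hugo-site", "master",
        "hugo-site-build", "example-site-bucket",
    )
    print(json.dumps(definition, indent=2))
```

Seen this way, the duplication is easier to spot: the build stage only produces a named artifact, and it is the Deploy action that decides which bucket the files finally end up in.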

CodePipeline also charges $1 per month for each active (run at least once) pipeline.

Oh My

Overall I think there is good value to gain from having a semi-unified suite of tools to handle CI/CD. It is far better than a smattering of separate pieces: a Git service, Jenkins for CI/CD, and JIRA or Trello for project tasks/issue tracking. I do wish AWS would streamline a few of these features and not make them separate services. Maybe for larger projects the complexity is justified, but for my experiment it felt like overkill. Also, if they dug in and made a project management/issue tracking service (even if it’s a GitHub clone) that integrates with all of them, I suspect more teams would be willing to overcome some of the rougher edges of these services in order to have everything in AWS, rather than using another third-party service such as Azure DevOps or the Atlassian suite of tools for the same.